Curious how AI 3‑D avatars are transforming digital experiences? Read the full story on how Sentifyd brings websites to life with interactive, human-like avatars. Article by Ibrahim Hossain.

Eight seconds. That’s the razor‑thin window of attention the average web visitor gives a brand in 2025 before bouncing to the next tab. Researchers now call it the goldfish gap, and it’s widening every year (devrix.com, 2024). Against that backdrop, 3‑D AI avatars are emerging as the most human way to hold, and convert, those fleeting moments.

Marketers have spent a decade tuning chat widgets, pop‑ups, and email drips to nudge prospects down the funnel. Yet conversion curves have flattened: static forms look dated, and scripted bots feel robotic. A new study from the University of Surrey finds that fully rendered digital doppelgängers generate “significantly deeper emotional engagement” than 2‑D counterparts or text‑only chatbots. (surrey.ac.uk)

Why? Presence. A photorealistic avatar that speaks your language, glances at you, and gestures naturally taps the brain’s mirror‑neuron system — the same wiring that fires during face‑to‑face conversation. For customer‑experience (CX) teams racing to differentiate, that presence translates to longer dwell time, richer data capture, and higher NPS.

90% of CX leaders in Zendesk’s 2025 Trends Report say AI is already delivering “strong ROI,” and the fastest movers are weaving human‑like interfaces into every touchpoint. (cxtrends.zendesk.com)

Enter Sentifyd Avatars

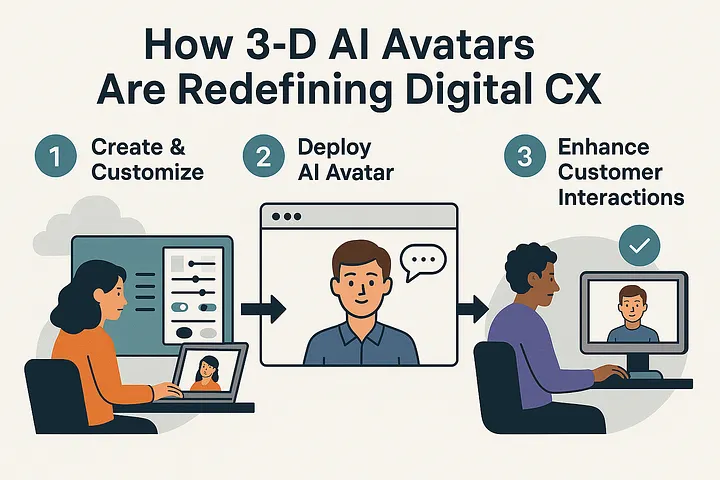

Sentifyd (sentifyd.io) turns that science into a turnkey platform: a cloud service for creating, customizing, and deploying fully interactive 3‑D avatars on any site with just two lines of code.

Each avatar combines:

- Hyper‑real 3‑D models streamed via WebGL, optimised for 60 FPS on mobile.

- RAG‑enabled large language model (LLM) dialogue grounded in your knowledge base for authoritative answers.

- Emotion‑aware voice and movement: speech adapts its pitch and pace to context, accompanied by matching facial and body expressions.

- Action Engine that lets avatars invoke tools — search the web, send emails, submit forms, or trigger webhooks — so they’re not just talking heads but full‑blown agents capable of getting real work done.

The result feels like chatting with a real concierge — minus the wait times and staffing cost. Companies from e‑learning platforms to luxury retail chains now greet visitors with a Sentifyd‑powered digital human that remembers context across sessions, performs tasks on their behalf, and escalates to live agents only when needed.

Avatar examples: an Avaturn realistic avatar (left) and a Ready Player Me avatar (right).

How It Works

Step 1 — Create

You can choose between Ready Player Me avatars and Avaturn’s personalized, realistic avatars. With Ready Player Me, you pick a template or upload a selfie to generate the avatar, then use drag‑and‑drop controls to tweak hair, attire, and brand accessories in minutes. With Avaturn, you are guided through providing photos from which the avatar is generated, and similar customization controls let you adjust the result.

Step 2 — Connect Knowledge

Behind the scenes, Sentifyd spins up a Retrieval‑Augmented Generation (RAG) pipeline: sources such as manually entered text and examples are chunked, vectorised, and indexed. At answer time, the most relevant chunks are retrieved and passed to the avatar’s LLM, so responses stay grounded in your content and consistent with compliance requirements and brand tone.
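To make the chunk, vectorise, index, and retrieve steps concrete, here is a minimal sketch in Python. It is not Sentifyd’s implementation: the function names, the 12‑word chunk size, and the sample sources are all assumptions for illustration, and a bag‑of‑words count stands in for the learned embedding model a production pipeline would likely use.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 12) -> list[str]:
    """Split a source into chunks of at most max_words words (size is an assumption)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def vectorise(text: str) -> Counter:
    """Toy 'embedding': lowercase bag-of-words counts, standing in for a real model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(sources: list[str]) -> list[tuple[str, Counter]]:
    """Index every chunk of every source as a (text, vector) pair."""
    return [(c, vectorise(c)) for s in sources for c in chunk(s)]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = vectorise(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(index: list[tuple[str, Counter]], question: str) -> str:
    """Assemble the grounded prompt that would be handed to an LLM."""
    context = "\n".join(f"- {c}" for c in retrieve(index, question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical knowledge-base entries, entered manually.
sources = [
    "Refunds are accepted within 30 days of purchase with a receipt.",
    "Standard shipping takes three to five business days within the EU.",
]
index = build_index(sources)
print(build_prompt(index, "How long does shipping take?"))
```

The shipping chunk ranks first for this question because it shares the word "shipping" with the query. A real deployment would swap the toy vectoriser for an embedding model and the list for a vector store, but the flow stays the same.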

Step 3 — Deploy Live

Embed a two‑line web element on your site, or share a link that runs your avatar. Sentifyd’s edge network streams the avatar, lip sync, and voice audio with <150 ms total latency in EU regions. A real‑time analytics dashboard tracks conversation statistics such as time, cost, drop-offs, and satisfaction.

Under the Hood

Sentifyd’s architecture balances visual fidelity with LLM safety:

- Front‑End Renderer: A custom Three.js layer renders the 3‑D avatar and synchronizes lip sync and animations with the voice.

- Dialogue Engine: An orchestration service routes user utterances through a policy guardrail → RAG retriever → LLM with tools → structured output including speech and actions.

- Voice & Gestures: Co‑articulation algorithms generate natural head‑nods, blinks, and micro‑expressions.

- Security & GDPR: All user utterances are processed transiently in EU data centres, with an optional on‑prem vector store for regulated verticals. Sentifyd.io does not save end-user conversations or any information users provide. Conversations are ephemeral, and users can download a copy of the transcript before ending the conversation.
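The Dialogue Engine flow described in the list above (policy guardrail, then RAG retriever, then LLM with tools, then structured output) can be sketched roughly as follows. Everything here is hypothetical: the blocked‑term list, the `send_email` tool, the `fake_llm` stand‑in, and the `AvatarTurn` shape are illustrative assumptions, not Sentifyd’s actual API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AvatarTurn:
    """Structured output for one turn: what the avatar says and what it did."""
    speech: str
    actions: list[dict] = field(default_factory=list)


# Hypothetical policy list; a real guardrail would be far richer than this.
BLOCKED_TERMS = {"password", "credit card"}


def guardrail(utterance: str) -> bool:
    """Return True when the utterance passes the (toy) policy check."""
    lowered = utterance.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)


def retrieve(utterance: str) -> list[str]:
    """Stub retriever: keyword lookup standing in for a vector search."""
    knowledge = {"shipping": "Standard shipping takes three to five business days."}
    return [fact for key, fact in knowledge.items() if key in utterance.lower()]


# Hypothetical tool registry: tool name -> callable taking an args dict.
TOOLS: dict[str, Callable[[dict], str]] = {
    "send_email": lambda args: f"email queued for {args['to']}",
}


def fake_llm(utterance: str, context: list[str]) -> dict:
    """Stand-in for an LLM call; returns speech plus any requested tool calls."""
    speech = context[0] if context else "I don't have that information yet."
    calls = []
    if "email" in utterance.lower():
        calls.append({"tool": "send_email", "args": {"to": "visitor@example.com"}})
    return {"speech": speech, "tool_calls": calls}


def handle(utterance: str) -> AvatarTurn:
    """Route one utterance: guardrail -> retriever -> LLM with tools -> output."""
    if not guardrail(utterance):
        return AvatarTurn(speech="Sorry, I can't help with that request.")
    context = retrieve(utterance)
    draft = fake_llm(utterance, context)
    actions = []
    for call in draft["tool_calls"]:
        tool = TOOLS.get(call["tool"])
        if tool is None:
            continue  # skip tools that are not registered
        actions.append({"tool": call["tool"], "result": tool(call["args"])})
    return AvatarTurn(speech=draft["speech"], actions=actions)


turn = handle("Can you email me the shipping times?")
print(turn.speech)
print(turn.actions)
```

Keeping the guardrail ahead of retrieval means a rejected request never reaches the model or the tools. Returning speech and actions in one structure lets the renderer voice the reply while the actions run separately.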

Looking Ahead

We are currently shipping a seamless Shopify integration so that store owners can add the Sentifyd 3-D avatars to their storefronts and have a 24/7 agent present to assist customers.

The roadmap already includes improving conversational presence, supporting more document types for training, and adding more tools to extend what the avatars can do. Expect sentiment‑aware escalations (“You sound frustrated. Let me connect you to Sarah, our human expert”) and privacy‑preserving voice cloning for consistent brand tone across languages.

Ready to greet every visitor with a digital human? Start Free – 500 credits included when you sign up today (https://sentifyd.io).

Written by Ibrahim Hossain